What is network meta-analysis?

To begin with, we will assume that most of our readers are familiar with systematic review and standard pairwise meta-analysis. For any readers who are new to these topics, the Cochrane Handbook provides an excellent guide. A standard pairwise meta-analysis can compare the efficacy or safety of exactly two treatments that have been directly compared in head-to-head clinical trials. However, in practice, there are often many potential treatments for a single disease. Policy-makers, physicians and patients need to be able to select the best treatment from amongst the many potential options. Network meta-analysis is an extension of standard pairwise meta-analysis that can be used to simultaneously compare any number of treatments.

In the simplest possible example of a network meta-analysis, there are two treatments of interest that have been directly compared to a common comparator, but not to each other. Usually the common comparator will be placebo or a standard treatment. This situation is illustrated by Figure 1A. The circles indicate treatments and the lines connect treatments that have been directly compared in head-to-head clinical trials. In this example, treatments A and C have both been directly compared to treatment B, but there are no trials that compare treatments A and C.
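For this simplest network, the indirect estimate of A versus C can be built from the A versus B and C versus B evidence. The sketch below follows the adjusted indirect comparison of Bucher et al. mentioned in the history section. It assumes that each comparison has already been summarised as a pooled effect on an additive scale such as the log odds ratio. The function name and the odds ratios in the usage lines are hypothetical.

```python
import math


def bucher_indirect(d_ab, se_ab, d_cb, se_cb):
    """Indirect estimate of A versus C via the common comparator B.

    d_ab: pooled effect of A relative to B (e.g. a log odds ratio) from the A-B trials
    d_cb: pooled effect of C relative to B from the C-B trials
    Returns (d_ac, se_ac): the effect of A relative to C and its standard error.
    """
    # The common comparator B cancels out: (A - B) - (C - B) = A - C
    d_ac = d_ab - d_cb
    # The A-B and C-B trials are independent, so their variances add
    se_ac = math.sqrt(se_ab ** 2 + se_cb ** 2)
    return d_ac, se_ac


# Hypothetical pooled odds ratios: A vs B = 0.5 (SE of log OR 0.20), C vs B = 0.8 (SE 0.25)
d_ac, se_ac = bucher_indirect(math.log(0.5), 0.20, math.log(0.8), 0.25)
low, high = d_ac - 1.96 * se_ac, d_ac + 1.96 * se_ac
print(f"OR A vs C: {math.exp(d_ac):.3f} (95% CI {math.exp(low):.3f} to {math.exp(high):.3f})")
```

Note that the indirect estimate is less precise than either of the direct estimates it is built from, because the two variances add.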

There is no limit to the number of treatments, trials and patients that can be included in a network meta-analysis. However, in order to conduct a standard network meta-analysis, the treatments should form a connected network, such that there is a path from each treatment to every other treatment in the network. In Figure 1, diagrams A, B and C all illustrate connected networks. However, diagram D illustrates a disconnected network: there are no trials that connect treatments E and F to the rest of the network. We will look at how to handle disconnected networks in a future blog post.
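Whether a network is connected can be checked with a breadth-first search over the treatments. This is a minimal sketch, assuming each head-to-head comparison is recorded as a pair of treatment labels; the function name is hypothetical, and the edges used for the diagram D example are an assumed layout in which E and F have only been compared with each other.

```python
from collections import defaultdict, deque


def is_connected(treatments, comparisons):
    """Return True if there is a path from every treatment to every other treatment.

    treatments: iterable of treatment labels
    comparisons: iterable of (t1, t2) pairs compared head-to-head in at least one trial
    """
    graph = defaultdict(set)
    nodes = set(treatments)
    for t1, t2 in comparisons:
        graph[t1].add(t2)
        graph[t2].add(t1)
        nodes.update((t1, t2))
    if not nodes:
        return True
    # Breadth-first search from an arbitrary starting treatment
    start = next(iter(nodes))
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbour in graph[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    # Connected only if the search reached every treatment
    return seen == nodes


print(is_connected("ABC", [("A", "B"), ("B", "C")]))                 # diagram A layout
print(is_connected("ABCEF", [("A", "B"), ("B", "C"), ("E", "F")]))   # assumed diagram D layout
```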

Standard terminology

You may be familiar with other network meta-analysis terms, such as indirect treatment comparison and mixed treatment comparison. Different groups have used these terms in different ways, but, in this blog, we will follow the definitions proposed by the International Society for Pharmacoeconomics and Outcomes Research (ISPOR) Task Force on Indirect Treatment Comparisons (1).

Following the ISPOR task force definitions, ‘indirect treatment comparison’ and ‘mixed treatment comparison’ are both types of network meta-analysis. Indirect treatment comparison is used to describe the analysis of networks that do not contain any loops; mixed treatment comparison is used to describe the analysis of networks that do contain loops. In Figure 1, diagrams A and C both illustrate indirect treatment comparisons, and diagram B illustrates a mixed treatment comparison. In diagram B, treatments A and B can be compared using direct evidence from AB trials, or indirect evidence via the BC and AC trials; hence, overall, the evidence for A versus B is a mix of the direct and indirect evidence.
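The loop distinction can also be checked mechanically. The sketch below is one way to do it, assuming each trial is recorded as the pairs of treatments it compares (a three-arm trial contributes all three of its pairs, which is a simplification) and using a union-find structure to detect a closed loop. The function name is hypothetical, and the diagram edges are taken from the description of Figure 1 above.

```python
def contains_loop(comparisons):
    """Return True if the network of pairwise comparisons contains a closed loop."""
    parent = {}

    def find(node):
        # Root of the group of treatments already joined to `node`
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving keeps lookups short
            node = parent[node]
        return node

    # Several trials of the same pair count as a single edge, not a loop
    edges = {tuple(sorted(pair)) for pair in comparisons}
    for t1, t2 in edges:
        root1, root2 = find(t1), find(t2)
        if root1 == root2:
            # t1 and t2 are already linked by another path, so this edge closes a loop
            return True
        parent[root1] = root2
    return False


diagram_a = [("A", "B"), ("B", "C")]
diagram_b = [("A", "B"), ("B", "C"), ("A", "C")]
for name, network in [("A", diagram_a), ("B", diagram_b)]:
    kind = "mixed" if contains_loop(network) else "indirect"
    print(f"Diagram {name}: {kind} treatment comparison")
```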

A short history of network meta-analysis

A simple method for indirect treatment comparison was first introduced by Bucher et al. in 1997 (2); Bayesian methods suitable for indirect treatment comparison or mixed treatment comparison were published by Lu and Ades in 2004 (3). Since then, the use of network meta-analysis has risen steadily (4) and many health technology assessment agencies now accept network meta-analysis, including England’s National Institute for Health and Care Excellence (NICE).

Statistical methods for network meta-analysis are continually being developed and Quantics can provide advice on the best choice of methodology for both simple and complex networks. Our recent work includes using a three-level hierarchical network meta-analysis model to account for different doses and classes of drugs. This project included over 50 studies and 30 treatments, and we applied network meta-analysis to several different outcomes. For another recent project, data were available from several different time points. We used a longitudinal network meta-analysis model to simultaneously synthesize all of the data and provide estimates of efficacy at different time points.

Further information

We hope you’ve found our first post informative. If there are any particular topics you’d like to see covered in the future then please let us know and make sure you have a look at our publications, presentations and posters which you can find here.

If you are looking for an in-depth introduction to network meta-analysis, YHEC and Quantics run joint courses, currently available in-house on request.

Find out more about our joint training program with YHEC

Read our second post covering the key assumptions of network meta-analysis below

Like all statistical analyses, network meta-analysis relies on a specific set of assumptions. In this second post, we’ll look at the key assumptions of network meta-analysis. In order to draw valid conclusions about the relative efficacy and safety of treatments in a network meta-analysis and facilitate good decision making, it is important to understand and evaluate these assumptions.

Network meta-analysis is simply an extension to ordinary pairwise meta-analysis, and hence the assumptions of network meta-analysis are very similar to the assumptions of meta-analysis. The validity of both meta-analyses and network meta-analyses is dependent upon:

- the adequacy of the evidence base and

- the similarity of the trials.

Adequacy of the evidence base

The adequacy of the evidence base depends on both internal and external factors. Internally, when developing a network meta-analysis, you should use a systematic review to identify the relevant studies. Using a systematic approach minimizes the risk of bias in the selection of the studies. Guidelines for health technology assessment submissions and network meta-analysis publications (e.g. the NICE Guide to the Methods of Technology Appraisal (1) and the PRISMA extension statement for network meta-analysis (2)) all require that network meta-analyses are based on a systematic review.

Externally, the adequacy of the evidence base depends upon the quality of the relevant studies and whether they have been fully published. Meta-analysis and network meta-analysis are only as good as the studies they are based on. For individual studies, important considerations include how patients were randomized to the treatments, whether both patients and outcome assessors were blinded to the treatment, and how missing data were handled. The Cochrane risk of bias tool (3) is commonly used to assess the quality of studies. If some studies have a high risk of bias, then a sensitivity analysis with and without these studies is recommended.
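A sensitivity analysis of this kind can be as simple as pooling one comparison twice, once with all trials and once with the high risk of bias trials excluded. This sketch assumes a fixed-effect inverse-variance model for brevity (a random-effects model is often preferred in practice); the function name and the trial data are hypothetical.

```python
import math


def pool_fixed_effect(estimates, std_errors):
    """Inverse-variance weighted (fixed-effect) pooled estimate and its standard error."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    return pooled, math.sqrt(1.0 / sum(weights))


# Hypothetical trials of one comparison: (log odds ratio, standard error, high risk of bias?)
trials = [
    (-0.40, 0.20, False),
    (-0.25, 0.15, False),
    (-0.90, 0.30, True),
]

all_est, all_se = pool_fixed_effect([t[0] for t in trials], [t[1] for t in trials])
low_rob = [t for t in trials if not t[2]]
sens_est, sens_se = pool_fixed_effect([t[0] for t in low_rob], [t[1] for t in low_rob])
print(f"All trials:       {all_est:.3f} (SE {all_se:.3f})")
print(f"Low risk of bias: {sens_est:.3f} (SE {sens_se:.3f})")
```

If the two pooled estimates differ materially, conclusions that rely on the high risk of bias trials should be treated with caution.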

In meta-analysis and network meta-analysis, publication bias is also a key concern. Publication bias arises when positive results are more likely to be published than negative results. Publication bias can occur either at the study level, when entire studies are left unpublished, or at the outcome level, when results are only partially published. Methods for assessing publication bias in meta-analysis are well established (e.g. funnel plots (4)), and where sufficient data are available, these can be applied to individual pairwise comparisons within a network. Alternatively, methods for assessing publication bias across entire networks have also been proposed (5, 6).
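For a single pairwise comparison, one common way to quantify funnel-plot asymmetry is Egger's regression test (4): the standardized effect (estimate divided by its standard error) is regressed on precision (one over the standard error), and an intercept far from zero suggests small-study effects such as publication bias. The sketch below implements that regression with ordinary least squares; the function name is hypothetical, and it stops at the t statistic, which would be compared with a t distribution on n - 2 degrees of freedom. With only a handful of trials per comparison the test has little power, and the network-wide methods (5, 6) are not shown here.

```python
import math


def egger_test(estimates, std_errors):
    """Egger's regression test for funnel-plot asymmetry.

    Regresses estimate / SE on 1 / SE by ordinary least squares and returns
    (intercept, standard error of the intercept, t statistic).
    """
    y = [e / s for e, s in zip(estimates, std_errors)]  # standardized effects
    x = [1.0 / s for s in std_errors]                   # precisions
    n = len(x)
    if n < 3:
        raise ValueError("Egger's test needs at least three studies")
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    # Residual variance on n - 2 degrees of freedom
    residual_var = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y)) / (n - 2)
    se_intercept = math.sqrt(residual_var * (1.0 / n + x_bar ** 2 / sxx))
    return intercept, se_intercept, intercept / se_intercept
```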

Similarity of the trials

In both meta-analysis and network meta-analysis, it is important to consider the similarity of the trials. The key assumption is that the trials shouldn’t differ in any characteristics that may impact the treatment effect. Here, it is important to differentiate between treatment responses and treatment effects. We define a treatment response as how patients react to an individual treatment, and a treatment effect as the difference in response between two treatments. To illustrate this, consider a trial that compares two treatments for tiredness: tea and coffee.

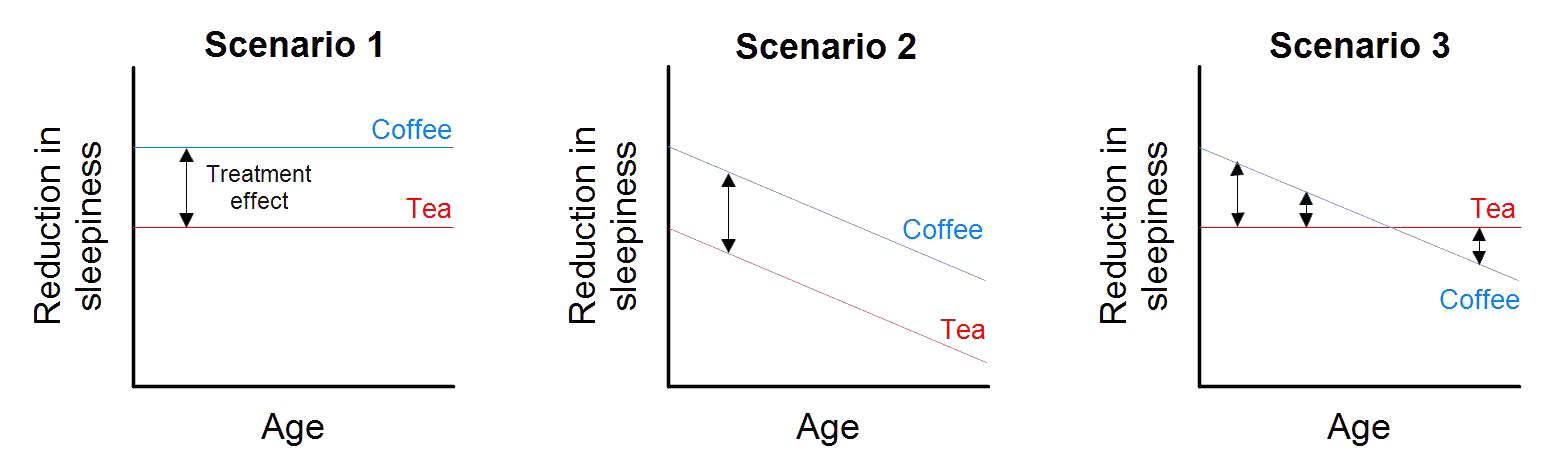

Figure 1: Treatment responses and treatment effects.

Figure 1 illustrates three scenarios for the relationship between the treatments (tea and coffee), the outcome (reduction in tiredness) and age. In Scenario 1, age doesn’t affect treatment response for either tea or coffee, hence there is also no impact on the treatment effect. In Scenario 2, age reduces the response to both tea and coffee, but note that the relationship is the same for each treatment, and hence the treatment effect is constant. In Scenario 3, age again reduces the response to both tea and coffee, but in this case age has a greater impact on coffee, so the treatment effect depends on age. For Scenarios 1 and 2, we would say that age is not a treatment effect modifier. In each of these cases, it would be appropriate to conduct a meta-analysis that combines studies of young patients with studies of old patients. For Scenario 3, we would say that age is a treatment effect modifier: studies of young patients indicate that coffee is more effective, but studies of old patients indicate that tea is more effective. It would not be appropriate to conduct a meta-analysis that combines studies of young patients with studies of old patients (a better approach would be to use a meta-regression, but that’s a topic for another post!).
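The distinction between responses and effects can be made concrete with a toy model. This sketch assumes that the reduction in tiredness is a straight-line function of age for each drink; the intercepts and slopes are invented to mimic the three scenarios and are not read from the figure.

```python
def response(intercept, slope, age):
    """Hypothetical linear reduction in tiredness for one treatment at a given age."""
    return intercept + slope * age


def treatment_effect(coffee, tea, age):
    """Treatment effect of coffee versus tea: the difference in responses at a given age."""
    return response(*coffee, age) - response(*tea, age)


# Hypothetical (intercept, slope) pairs for (coffee, tea) in each scenario
scenarios = {
    "Scenario 1": ((6.0, 0.0), (4.0, 0.0)),      # age affects neither response
    "Scenario 2": ((8.0, -0.05), (6.0, -0.05)),  # same age slope for both drinks
    "Scenario 3": ((9.0, -0.10), (6.0, -0.03)),  # age reduces the coffee response more
}

for name, (coffee, tea) in scenarios.items():
    effects = [round(treatment_effect(coffee, tea, age), 2) for age in (20, 70)]
    print(name, "coffee minus tea at ages 20 and 70:", effects)
```

In Scenarios 1 and 2 the effect is the same at both ages even though the responses in Scenario 2 fall with age; in Scenario 3 the effect changes sign, which is what makes age a treatment effect modifier.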

Both study design characteristics and patient characteristics are important. Following on with our example, key study design characteristics may include the location and setting of the trial (Italy or America, at home or at work), the formulation of the treatment (instant coffee or an espresso), and the time points when key outcomes were measured (15 minutes post treatment or 2 hours post treatment). Key patient characteristics might include whether concomitant medications are allowed (e.g. Red Bull) and the severity of the condition (common Monday morning tiredness or new parent tiredness).

In network meta-analysis, we want all of the trials in the network to be similar with respect to any characteristics that are potential treatment effect modifiers. This assumption can be broken down by looking separately at each of homogeneity, transitivity (similarity) and consistency. To illustrate these concepts, we expand our tiredness example to include a control: decaffeinated tea or coffee. Figure 2 provides a network diagram; it shows that, as well as coffee versus tea trials, there are also trials of coffee versus decaf (coffee), and tea versus decaf (tea).

Figure 2: Network diagram

Homogeneity refers to the equivalence of trials within each pairwise comparison in the network. Hence we can examine homogeneity separately for the coffee versus tea trials, the coffee versus decaf trials and the tea versus decaf trials. For each comparison, we can assess the degree of heterogeneity qualitatively by reviewing the trial characteristics, but also quantitatively by comparing the treatment effects estimated by each trial. The I-squared statistic (7) is commonly used to quantify the degree of heterogeneity within a pairwise comparison.
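I-squared is derived from Cochran's Q, the weighted sum of squared deviations of each trial's estimate from the inverse-variance pooled estimate, as I² = max(0, (Q - df) / Q) with df equal to the number of trials minus one. The sketch below computes both for one pairwise comparison (for example, the coffee versus decaf trials); the function name is hypothetical.

```python
def i_squared(estimates, std_errors):
    """Cochran's Q and the I-squared statistic for the trials of one pairwise comparison."""
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, estimates)) / sum(weights)
    # Cochran's Q: weighted squared deviations from the fixed-effect pooled estimate
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, estimates))
    df = len(estimates) - 1
    # I-squared: share of the variability beyond what chance alone would produce, floored at 0
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return q, i2
```

An I² near 0 suggests the trials within the comparison agree about as well as sampling error alone would allow; values closer to 1 indicate substantial heterogeneity.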

Transitivity (or similarity, as it is referred to in some of the literature) concerns the validity of making indirect comparisons. In our example, consider whether it is appropriate to estimate the effect of coffee versus tea via the trials that include decaf as a control. Unlike homogeneity, transitivity cannot be evaluated quantitatively. Transitivity must be evaluated by carefully reviewing the characteristics of the trials. In our example, an important consideration is whether decaf coffee can be considered equivalent to decaf tea. If decaf coffee and decaf tea are likely to lead to different responses, then an indirect comparison that assumes they are equivalent would not be appropriate.

Consistency refers to the equivalence of direct and indirect evidence. In our example, we can estimate the effect of coffee versus tea directly from the coffee versus tea trials, or indirectly via the decaf trials. Like heterogeneity, inconsistency can be assessed both qualitatively (by reviewing the trial characteristics) and quantitatively. In our example, to assess inconsistency quantitatively, we just compare the direct estimate with the indirect estimate. For complex networks, more sophisticated methods such as node-splitting (8) can be used to assess inconsistency.
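For a single loop like this one, the comparison of direct and indirect evidence can be expressed as a z-test on their difference, in the spirit of the Bucher method. This sketch assumes the direct and indirect estimates come from independent sets of trials and that decaf coffee and decaf tea can be treated as one node (the transitivity assumption above); the function name and all numbers are hypothetical, and node-splitting for more complex networks is not shown.

```python
import math


def inconsistency_z(d_direct, se_direct, d_indirect, se_indirect):
    """Difference between direct and indirect estimates, its z statistic and two-sided p-value."""
    diff = d_direct - d_indirect
    # Independent sources of evidence, so the variances add
    se_diff = math.sqrt(se_direct ** 2 + se_indirect ** 2)
    z = diff / se_diff
    # Two-sided p-value from the standard normal distribution
    p = math.erfc(abs(z) / math.sqrt(2))
    return diff, z, p


# Hypothetical effects versus decaf, treating decaf coffee and decaf tea as the same control
d_coffee_decaf, se_coffee_decaf = -0.80, 0.20
d_tea_decaf, se_tea_decaf = -0.50, 0.20
d_indirect = d_coffee_decaf - d_tea_decaf
se_indirect = math.sqrt(se_coffee_decaf ** 2 + se_tea_decaf ** 2)

# Hypothetical direct estimate from the coffee versus tea trials
diff, z, p = inconsistency_z(-0.35, 0.25, d_indirect, se_indirect)
print(f"direct - indirect = {diff:.2f}, z = {z:.2f}, p = {p:.3f}")
```

A large p-value does not prove consistency: with few trials the test has little power, so the qualitative review of trial characteristics remains essential.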

We hope you’ve found this brief introduction to homogeneity, transitivity and consistency valuable. If you’d like to learn more about these concepts, then we would recommend Salanti’s very thorough discussion (9).

This brings us to the end of our post on the key assumptions of network meta-analysis. In order to conduct appropriate analyses and facilitate good decision making, a network meta-analysis must be based on an adequate evidence base and the trials must be similar with respect to treatment effect modifiers.

Further information

If there are any particular topics you’d like to see covered in the future, then please let us know. If you are looking for an in-depth introduction to network meta-analysis, YHEC and Quantics run joint courses, currently available in-house on request. Some slides from one session of this course are available here.

References – An introduction to network meta-analysis (mixed treatment comparison / indirect treatment comparison)

(1) Jansen JP, Fleurence R, Devine B et al. Interpreting indirect treatment comparisons and network meta-analysis for health-care decision making: report of the ISPOR Task Force on Indirect Treatment Comparisons Good Research Practices: part 1. Value Health. 2011; 14: 417-428.

(2) Bucher HC, Guyatt GH, Griffith LE, Walter SD. The results of direct and indirect treatment comparisons in meta-analysis of randomized controlled trials. J Clin Epidemiol. 1997; 50: 683-691.

(3) Lu G, Ades AE. Combination of direct and indirect evidence in mixed treatment comparisons. Stat Med. 2004; 23: 3105-3124.

(4) Salanti G. Indirect and mixed-treatment comparison, network, or multiple-treatments meta-analysis: many names, many benefits, many concerns for the next generation evidence synthesis tool. Res Synth Methods. 2012; 3: 80-97.

References – The key assumptions of network meta-analysis

(1) National Institute for Health and Care Excellence. Guide to the methods of technology appraisal. 2013.

(2) Hutton B et al. The PRISMA Extension Statement for Reporting of Systematic Reviews Incorporating Network Meta-analyses of Health Care Interventions: Checklist and Explanations. Annals of Internal Medicine. 2015; 162: 777-784.

(3) Higgins JP et al. The Cochrane Collaboration’s tool for assessing risk of bias in randomised trials. BMJ. 2011; 343: d5928.

(4) Egger M et al. Bias in meta-analysis detected by a simple, graphical test. BMJ. 1997; 315: 629-634.

(5) Mavridis D et al. A selection model for accounting for publication bias in a full network meta-analysis. Statistics in Medicine. 2014; 33(30): 5399-5412.

(6) Trinquart L et al. A test for reporting bias in trial networks: simulation and case studies. BMC Medical Research Methodology. 2014; 14: 112.

(7) Higgins J et al. Measuring inconsistency in meta-analyses. BMJ. 2003; 327: 557-559.

(8) Dias S et al. Checking consistency in mixed treatment comparison meta-analysis. Statistics in Medicine. 2010; 29(7-8): 932-944.

(9) Salanti G. Indirect and mixed-treatment comparison, network, or multiple-treatments meta-analysis: many names, many benefits, many concerns for the next generation evidence synthesis tool. Res Synth Methods. 2012; 3: 80-97.